Multi-Modal Data Fusion: Integrating Text, Image, Audio, and Sensor Data in Real-Time Analytics Pipelines

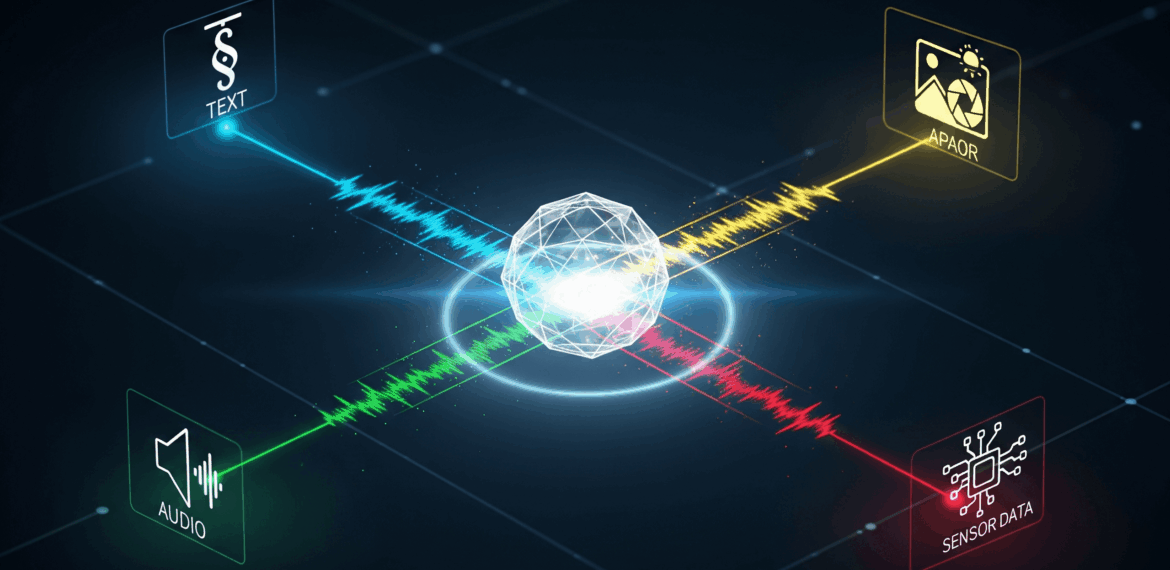

Organizations today are inundated with data from every imaginable source. A single business transaction may involve structured tabular records, accompanying documents, customer service audio, geolocation signals from a mobile device, and even surveillance camera footage. Each of these data modalities (text, image, audio, and sensor streams) provides its own perspective.

The challenge? These datasets rarely exist in isolation. They are interconnected fragments of the same reality. When analyzed independently, they deliver only partial truths. But when fused together in real time, they can power transformative insights, whether predicting equipment failures, detecting fraud, diagnosing diseases, or delivering hyper-personalized customer experiences.

This blog explores multi-modal data fusion in depth: what it is, how it works, the enabling technologies, real-world applications, the challenges, and how companies like Datahub Analytics help organizations implement it successfully.

Understanding Multi-Modal Data Fusion

What is Multi-Modal Data?

Multi-modal data refers to information captured through different channels or modes of sensing:

- Text: Customer reviews, chat logs, reports, emails, documents, clinical notes.
- Image & Video: Surveillance feeds, medical scans, defect detection in manufacturing, satellite imagery.
- Audio: Customer support calls, vibration/acoustic signals from machinery, spoken commands in virtual assistants.
- Sensor Data: IoT telemetry, GPS coordinates, accelerometer readings, temperature logs, biometric sensors.

Each modality has a different structure, storage requirement, and processing approach. Combining them is non-trivial but essential.

What is Data Fusion?

Data fusion is the process of integrating multiple data sources into a unified, coherent view. In multi-modal fusion, the goal is to combine complementary insights:

- Redundancy elimination: Cross-verifying multiple modalities reduces noise.
- Completeness: Filling information gaps by integrating what one modality misses.
- Contextual richness: Adding layers of meaning by combining text semantics with visual or sensor signals.

Example:

A medical AI analyzing an X-ray (image) might flag an anomaly. But when fused with a patient’s vitals (sensor) and doctor’s notes (text), the system can offer a more accurate and context-aware diagnosis.

Why Real-Time Fusion is the Next Frontier

Batch analytics has served enterprises for years, but industries are moving toward real-time intelligence. With streaming data pipelines and low-latency infrastructure, the focus is shifting from “What happened yesterday?” to “What’s happening right now, and what should we do about it?”

Scenarios demanding real-time multi-modal fusion include:

- Smart Cities: Traffic cameras, GPS data, and pollution sensors working together to optimize traffic flows.
- Financial Services: Fraud detection combining biometric login, transaction velocity, and device geolocation in milliseconds.
- Manufacturing: Predictive maintenance powered by vibration signals, heat sensors, and machine vision.
- Healthcare: ICU monitoring blending vitals (sensors), radiology images, and clinical notes in real time to detect patient deterioration.

The business edge lies not just in analyzing data, but in doing it instantly and taking automated action.

Fusion Techniques in Practice

Multi-modal fusion is not a single method but a family of strategies.

1. Early Fusion (Feature-Level Fusion)

- Features from each modality are combined into a single representation before the model is trained.
- Example: In speech-to-text models, both the raw waveform and spectrogram features are merged with linguistic tokens.
- Pros: Rich representation, strong interaction between modalities.
- Cons: High computational complexity, requires alignment at the feature level.
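To make feature-level fusion concrete, here is a minimal sketch in plain Python. It assumes each modality has already been reduced to a numeric feature vector; the modality names, the sample values, and the choice of L2 normalization are illustrative assumptions, not a prescribed method.

```python
from math import sqrt

def l2_normalize(vec):
    """Scale a vector to unit length so no modality dominates by magnitude."""
    norm = sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)

def early_fuse(features_by_modality, order):
    """Concatenate normalized per-modality features in a fixed order."""
    fused = []
    for name in order:
        fused.extend(l2_normalize(features_by_modality[name]))
    return fused

# Hypothetical feature vectors for one time step.
sample = {
    "vibration": [3.0, 4.0],
    "thermal": [0.0, 2.0],
    "vision": [1.0, 0.0, 0.0],
}
fused_vector = early_fuse(sample, order=["vibration", "thermal", "vision"])
print(fused_vector)  # one 7-dimensional vector fed to a single downstream model
```

The fixed `order` argument matters: the downstream model sees only positions, so every sample must place each modality's features in the same slots.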

2. Late Fusion (Decision-Level Fusion)

- Each modality is processed by its own model, and the predictions are combined afterward.
- Example: Fraud detection where one model looks at text logs, another at transaction amounts, and another at geolocation. The final decision merges all outputs.
- Pros: Scalable, modular, easy to extend with new data sources.
- Cons: May lose interdependencies between modalities.
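A decision-level combination can be as simple as a weighted average of per-modality scores. The sketch below assumes each upstream model emits a fraud probability between 0 and 1; the model names, weights, and the 0.5 threshold are hypothetical values chosen for illustration.

```python
def late_fuse(scores, weights, threshold=0.5):
    """Weighted average of per-modality scores, using only modalities present."""
    total_weight = sum(weights[name] for name in scores)
    if total_weight <= 0:
        raise ValueError("at least one modality with positive weight is required")
    fused = sum(scores[name] * weights[name] for name in scores) / total_weight
    return fused, fused >= threshold

# Hypothetical outputs from three independent models.
scores = {"text_logs": 0.2, "amount": 0.9, "geolocation": 0.7}
weights = {"text_logs": 1.0, "amount": 2.0, "geolocation": 1.0}

fused_score, is_fraud = late_fuse(scores, weights)
print(round(fused_score, 3), is_fraud)
```

Because the average is normalized by the weights of the modalities actually present, a missing stream (for example, no geolocation signal) simply drops out of `scores` instead of breaking the decision.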

3. Hybrid Fusion

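- Combines both approaches: some modalities are fused at the feature level, while others are merged at the decision level.
- Example: Autonomous vehicles, where lidar and radar are fused at the sensor level and camera vision is fused at the decision level.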
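The autonomous-vehicle example can be sketched in two stages. This is a simplified illustration under stated assumptions: lidar and radar each report a distance with a known variance and are merged by inverse-variance weighting (one common sensor-level technique, swapped in here for illustration), and the camera contributes only an obstacle probability that is combined at the decision level. All numbers and thresholds are hypothetical.

```python
def inverse_variance_fuse(measurements):
    """Fuse (value, variance) pairs; lower-variance sensors get more weight."""
    weights = [1.0 / variance for _, variance in measurements]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, measurements)) / total
    return value, 1.0 / total

def should_brake(distance_m, camera_obstacle_prob,
                 min_distance_m=15.0, min_prob=0.6):
    """Decision-level rule combining fused range with the camera's verdict."""
    return distance_m < min_distance_m and camera_obstacle_prob >= min_prob

lidar = (12.0, 0.25)  # (distance in meters, variance), hypothetical values
radar = (13.0, 1.0)

distance, variance = inverse_variance_fuse([lidar, radar])  # sensor-level stage
brake = should_brake(distance, camera_obstacle_prob=0.8)    # decision-level stage
print(round(distance, 2), round(variance, 2), brake)
```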

4. Deep Learning for Fusion

- Multimodal Transformers (e.g., CLIP, Flamingo, GPT-4V): Aligning text and image embeddings in a shared latent space.
- Graph Neural Networks: Modeling relationships between heterogeneous data nodes.
- Cross-Attention Mechanisms: Allowing modalities to “attend” to each other.
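As a rough illustration of cross-attention, the sketch below computes scaled dot-product attention in which text token vectors (queries) attend over image patch vectors (keys and values). It deliberately omits the learned projection matrices, multiple heads, and batching that real models use, and the tiny vectors are made-up inputs.

```python
from math import exp, sqrt

def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    peak = max(xs)
    exps = [exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Each query returns a weighted mix of values, weighted by query-key similarity."""
    dim = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / sqrt(dim) for k in keys]
        weights = softmax(scores)
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs

# Hypothetical embeddings: two text tokens attending over three image patches.
text_tokens = [[1.0, 0.0], [0.0, 1.0]]
image_patches = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]

attended = cross_attention(text_tokens, image_patches, image_patches)
print(attended)  # one image-informed vector per text token
```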

Architecture of a Real-Time Multi-Modal Pipeline

Let’s break down a modern fusion pipeline step by step:

1. Data Ingestion Layer

- Tools: Apache Kafka, AWS Kinesis, Google Pub/Sub.
- Streams: sensor telemetry, video feeds, audio inputs, text documents.
- Requirements: high-throughput, low-latency capture.
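Whichever broker is used, it helps if every modality arrives in a common envelope so downstream stages can route and align messages uniformly. The dataclass below is a hypothetical schema, not a format defined by Kafka, Kinesis, or Pub/Sub; all field names are assumptions made for illustration.

```python
import json
from dataclasses import asdict, dataclass

@dataclass(frozen=True)
class ModalEvent:
    """Hypothetical envelope shared by all modalities on the ingestion bus."""
    source_id: str       # device, camera, or application that produced the event
    modality: str        # "text", "image", "audio", or "sensor"
    event_time_ms: int   # when the event occurred, not when it was received
    payload_ref: str     # inline text, or a pointer to a large blob stored elsewhere

    def to_json(self) -> str:
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "ModalEvent":
        return cls(**json.loads(raw))

event = ModalEvent("camera-17", "image", 1700000000000, "blob://frames/placeholder")
wire = event.to_json()             # the string a producer would publish
restored = ModalEvent.from_json(wire)
print(restored == event)
```

Carrying the event time in the envelope, rather than relying on arrival time, is what makes the later synchronization step possible when streams have different latencies.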

2. Preprocessing & Transformation

- Text: NLP techniques (tokenization, embeddings, sentiment extraction).
- Images/Video: CNNs/transformers for object detection, scene segmentation.
- Audio: Conversion to spectrograms, speech-to-text models, vibration analysis.
- Sensors: Time-series smoothing, normalization, outlier detection.
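The sensor steps listed above can be sketched with the standard library alone. This example assumes a short window of temperature readings and uses a z-score filter, an exponential moving average, and min-max scaling; the threshold, the smoothing factor, and the readings are illustrative choices rather than recommended settings.

```python
from statistics import mean, pstdev

def zscore_outlier_indices(values, threshold=2.0):
    """Indices of readings more than `threshold` standard deviations from the mean."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return []
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]

def exponential_moving_average(values, alpha=0.3):
    """Smooth a series; higher alpha follows new readings more closely."""
    smoothed = []
    for value in values:
        previous = smoothed[-1] if smoothed else value
        smoothed.append(alpha * value + (1 - alpha) * previous)
    return smoothed

def min_max_normalize(values):
    """Rescale values to the range [0, 1]."""
    low, high = min(values), max(values)
    if high == low:
        return [0.0 for _ in values]
    return [(v - low) / (high - low) for v in values]

# Hypothetical temperature window with one spike.
readings = [20.1, 20.3, 20.2, 20.4, 20.2, 35.0, 20.3, 20.1]

outliers = zscore_outlier_indices(readings)
cleaned = [v for i, v in enumerate(readings) if i not in outliers]
normalized = min_max_normalize(exponential_moving_average(cleaned))
print(outliers, normalized)
```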

3. Synchronization & Alignment

- Timestamping across modalities.
- Sliding windows for stream alignment.
- Handling missing or delayed data packets.
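One simple way to align streams is a nearest-timestamp join with a tolerance: pick a reference stream (here, video frames) and attach the closest reading from every other stream, leaving a gap when nothing is close enough. The sketch assumes each stream is already sorted by timestamp in milliseconds; the stream names, payloads, and the 50 ms tolerance are hypothetical.

```python
from bisect import bisect_left

def nearest_index(sorted_stamps, target, tolerance):
    """Index of the timestamp closest to target, or None if outside tolerance."""
    i = bisect_left(sorted_stamps, target)
    candidates = [j for j in (i - 1, i) if 0 <= j < len(sorted_stamps)]
    if not candidates:
        return None
    best = min(candidates, key=lambda j: abs(sorted_stamps[j] - target))
    return best if abs(sorted_stamps[best] - target) <= tolerance else None

def align_streams(reference, others, tolerance_ms):
    """Join each reference event with the nearest event from every other stream."""
    stamps = {name: [ts for ts, _ in stream] for name, stream in others.items()}
    rows = []
    for ts, payload in reference:
        row = {"ts": ts, "reference": payload}
        for name, stream in others.items():
            idx = nearest_index(stamps[name], ts, tolerance_ms)
            row[name] = stream[idx][1] if idx is not None else None  # None marks a gap
        rows.append(row)
    return rows

frames = [(0, "frame-0"), (100, "frame-1"), (200, "frame-2")]
others = {
    "sensor": [(10, 21.5), (120, 21.7), (350, 22.0)],
    "audio": [(0, "chunk-0"), (210, "chunk-1")],
}

aligned = align_streams(frames, others, tolerance_ms=50)
for row in aligned:
    print(row)
```

Rows that contain `None` make missing or delayed packets explicit, so the fusion layer can decide whether to impute, skip, or fall back to the modalities that did arrive.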

4. Fusion & Modeling Layer

- Multimodal deep learning architectures.
- Ensemble models combining modality-specific learners.
- Real-time scoring engines (TensorFlow Serving, TorchServe).

5. Storage Layer

- Hot storage (Redis, in-memory caches) for real-time streams.
- Cold storage (HDFS, S3, data lakes) for batch historical analysis.

6. Visualization & Action Layer

- BI dashboards (Tableau, Power BI, custom real-time dashboards).
- Integration with RPA or SOAR platforms for automated workflows.

Industry Case Studies

1. Healthcare: ICU Early Warning Systems

- Modalities fused:
  - Sensor data: ECG, oxygen saturation.
  - Text: Doctor’s notes.
  - Images: X-rays.
- Outcome: Predict sepsis or cardiac arrest hours earlier than traditional monitoring.

2. Smart Manufacturing

- Modalities fused:
  - IoT sensors monitoring vibration/temperature.
  - Audio signals detecting abnormal machine noises.
  - Visual inspection via cameras.
- Outcome: Reduced downtime through predictive maintenance, saving millions annually.

3. Retail Customer Analytics

- Modalities fused:
  - Text: Customer reviews, chat logs.
  - Images: Store video for foot traffic analysis.
  - Sensor data: IoT beacons tracking customer movement.
- Outcome: Improved store layouts and personalized offers.

4. Financial Fraud Detection

- Modalities fused:
  - Text: Transaction metadata.
  - Images: Biometric verification (face ID).
  - Sensor: Geolocation/GPS.
- Outcome: Sub-second fraud prevention in online banking.

5. Security & Defense

- Modalities fused:
  - Drone video feeds.
  - Satellite imagery.
  - Ground sensors (motion, seismic).
- Outcome: Real-time threat identification, improved mission intelligence.

Key Challenges

- Data Heterogeneity
  - Different formats, resolutions, and sampling rates.
- Synchronization
  - Aligning real-time streams with variable latencies.
- Scalability
  - Managing petabytes of streaming image + audio data.
- Model Complexity
  - Designing architectures that generalize across modalities.
- Latency Constraints
  - Sub-second inference required in mission-critical applications.
- Data Governance & Security
  - Handling sensitive data like medical scans or biometric authentication responsibly.

The Future of Multi-Modal Fusion

The field is evolving rapidly with transformer-based architectures that can natively handle multiple modalities, such as OpenAI’s GPT-4V, Google’s Gemini, and Meta’s ImageBind. These models open possibilities for:

- Unified intelligence platforms: Single models ingesting text, images, audio, and sensors simultaneously.
- Edge deployment: Running multi-modal analytics directly on IoT devices with 5G/6G connectivity.
- Generative AI integration: Turning fused analytics into proactive decision-making (e.g., auto-generating patient reports from multi-modal health data).

How Datahub Analytics Can Help

At Datahub Analytics, we bring deep expertise in:

- Multi-Modal Ingestion Pipelines: Kafka, Spark Streaming, and cloud-native solutions for scalable real-time capture.
- AI/ML Modeling: Deploying multi-modal deep learning models, including transformers and hybrid fusion architectures.
- Infrastructure Engineering: Building containerized, hybrid cloud setups for resilient, high-performance pipelines.
- Visualization & Action Systems: Designing dashboards that don’t just present fused insights but trigger action.
- Security & Compliance: Ensuring adherence to GDPR, HIPAA, and regional regulations while maintaining data quality.

Our clients in healthcare, finance, manufacturing, telecom, and government have leveraged multi-modal pipelines we built to improve decision-making, cut costs, and unlock new business models.

Conclusion

The next generation of analytics is multi-modal and real-time. Businesses that continue analyzing text, image, audio, and sensor data in isolation will always be missing context. The real competitive edge lies in fusing them to achieve contextual intelligence that is instant, actionable, and predictive.

By integrating these streams into real-time pipelines, organizations move from reactive reporting to proactive decision-making. Multi-modal fusion is not just an analytics upgrade; it’s a business transformation strategy.

And with partners like Datahub Analytics, the complexity of building these pipelines becomes an opportunity instead of a barrier.